AMD’s MCM Design Cost Savings Allow Aggressive Pricing

Samuel Wan / 7 years ago

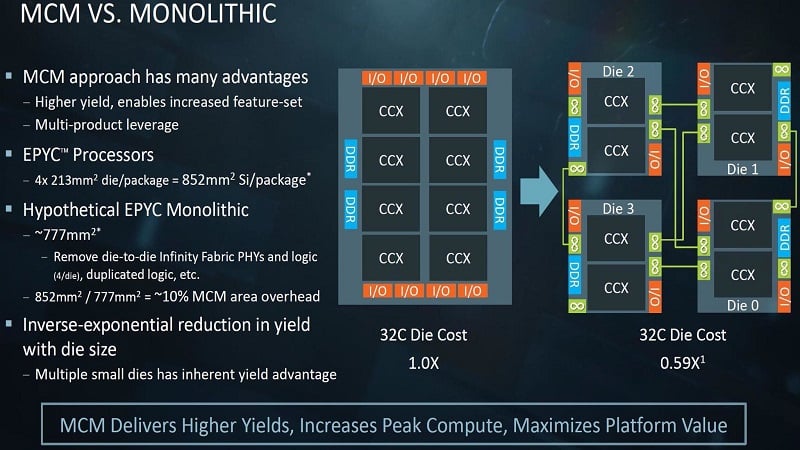

As the underdog, AMD has to be more aggressive than Intel to win market share. Intel, meanwhile, enjoys greater economies of scale and can charge more as the dominant brand. As a result, AMD has turned to innovative ways to undercut its much larger competitor. For its new Summit Ridge designs, AMD adopted a multi-chip module (MCM) architecture, and it turns out this leads to significant cost savings.

For Ryzen, Threadripper, and EPYC, AMD is using a common 8-core Summit Ridge die, made up of two 4-core CCX units. To create Threadripper and EPYC CPUs, AMD stitches 2 or 4 of these dies together on an MCM package, connected by the high-speed Infinity Fabric interconnect. As a result, these larger CPUs are effectively glued together, although the dies were designed to be combined this way.
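The core counts follow directly from that layout. A minimal sketch of the arithmetic, where the function and constant names are purely illustrative:

```python
# Core-count arithmetic for the MCM layout described above.
# Names here are illustrative, not AMD terminology.
CORES_PER_CCX = 4  # each CCX unit has 4 cores
CCX_PER_DIE = 2    # each die carries 2 CCX units


def cores_per_package(dies: int) -> int:
    """Total cores in a package built from `dies` identical dies."""
    return dies * CCX_PER_DIE * CORES_PER_CCX


for dies in (1, 2, 4):
    print(f"{dies} die(s) -> {cores_per_package(dies)} cores")
```

A single die gives 8 cores, two give 16, and four give the 32-core part.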

AMD MCM Cuts Cost by 41%

According to AMD, the move to an MCM design is critical to its pricing strategy. By using a common silicon design, AMD is able to reap greater economies of scale. Dies in the top 5% go into Threadripper, with even better ones reserved for EPYC. Regular Ryzen gets the lower-binned dies, and defective ones are harvested for the low-end Ryzen chips. This means AMD gets better effective yields and makes the most of its silicon.
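To see why smaller dies tend to yield better, here is a rough sketch using the textbook Poisson yield model. This is not AMD's cost model: the defect density and die areas are hypothetical values chosen only for illustration, and the sketch assumes a monolithic 32-core die would be exactly four times the area of one 8-core die.

```python
import math


def poisson_yield(area_mm2: float, defects_per_mm2: float) -> float:
    """Fraction of defect-free dies under the simple Poisson yield model."""
    return math.exp(-area_mm2 * defects_per_mm2)


# Hypothetical numbers, for illustration only (not from AMD).
DEFECT_DENSITY = 0.001           # defects per mm^2
SMALL_DIE_AREA = 200.0           # mm^2, one 8-core die
LARGE_DIE_AREA = 4 * SMALL_DIE_AREA  # mm^2, assumed monolithic 32-core die

small_yield = poisson_yield(SMALL_DIE_AREA, DEFECT_DENSITY)
large_yield = poisson_yield(LARGE_DIE_AREA, DEFECT_DENSITY)

print(f"small-die yield: {small_yield:.3f}")
print(f"large-die yield: {large_yield:.3f}")
```

Under this model the large die's yield equals the small die's yield raised to the fourth power, so one defect scraps four times as much silicon. Harvesting partially defective dies, as described above, would improve the small-die case further; the sketch ignores that along with packaging and interconnect overhead.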

Due to the MCM design, AMD’s 4-module, 32-core CPU costs just 0.59x as much as a monolithic 32-core design would. This 41% cost saving is being passed on to the consumer in the form of aggressive pricing. The remaining question is how much performance is lost with the MCM design. It will also be interesting to see how Intel reacts and evolves to meet this resurgent AMD.
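The 41% figure is simply the complement of the 0.59x cost ratio. A minimal sketch of that arithmetic, with an illustrative function name:

```python
def saving_from_ratio(cost_ratio: float) -> float:
    """Fractional saving implied by a relative cost (MCM cost / monolithic cost)."""
    return 1.0 - cost_ratio


MCM_VS_MONOLITHIC = 0.59  # ratio quoted by AMD in the article

print(f"{saving_from_ratio(MCM_VS_MONOLITHIC):.0%}")  # prints 41%
```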