Facebook Rolling Out AI for Detecting Suicidal Posts

Ron Perillo / 6 years ago

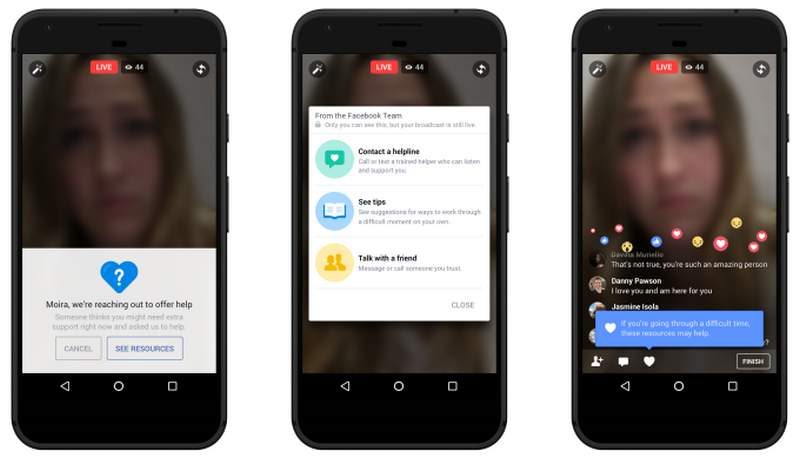

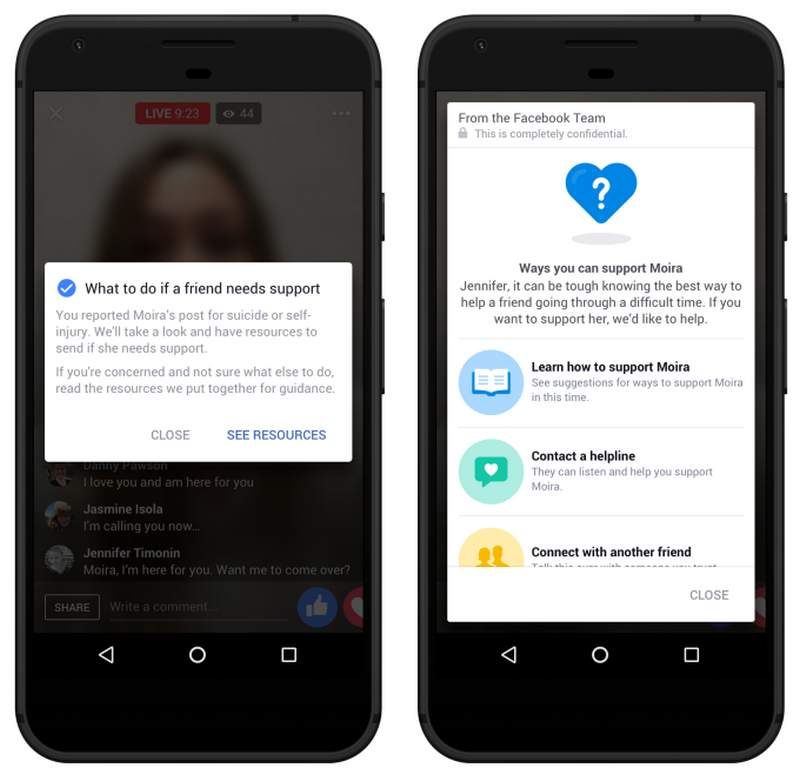

Facebook has a new feature at its disposal. This time, however, it is for something very serious, with real lives at stake. The social media giant is rolling out a new artificial intelligence system that will detect suicidal posts on the platform. The goal is to find these posts before they are even seen by users. The AI searches for specific suicidal thought patterns and, when it deems intervention necessary, alerts the user at risk and directs them to mental health resources. In some cases, it may even contact people on their friends list or first responders to intervene.

AI Feature Rolling Out Worldwide

The feature was initially available to US users only, but it will now scan various types of content worldwide. The only exception is the European Union, where privacy laws prohibit the use of such technology. Aside from notifying users, the AI will also sort users into risk categories and prioritize them. Those deemed at highest risk are given top priority when urgent intervention by moderators is necessary.

The social media platform is also dedicating more human moderators, who have local language resources at their disposal. These moderators have been trained to deal with such cases, and Facebook is partnering with groups such as Save.org and the National Suicide Prevention Lifeline.

“This is about shaving off minutes at every single step of the process, especially in Facebook Live,” according to VP of product management Guy Rosen. Over the past month of testing alone, Facebook has initiated over 100 “wellness checks,” with first responders visiting affected users. “There have been cases where the first responder has arrived and the person is still broadcasting.”

There are, of course, those who have concerns about the creepiness of having an AI peer into people’s lives. However, Facebook chief security officer Alex Stamos assures users that this is actually a good thing. Moreover, he says that the company is taking the responsible use of AI seriously.

https://twitter.com/alexstamos/status/935184558797889536