MIT Releases Deepfake of ‘Nixon’ Announcing Apollo 11 Disaster

Mike Sanders / 4 years ago

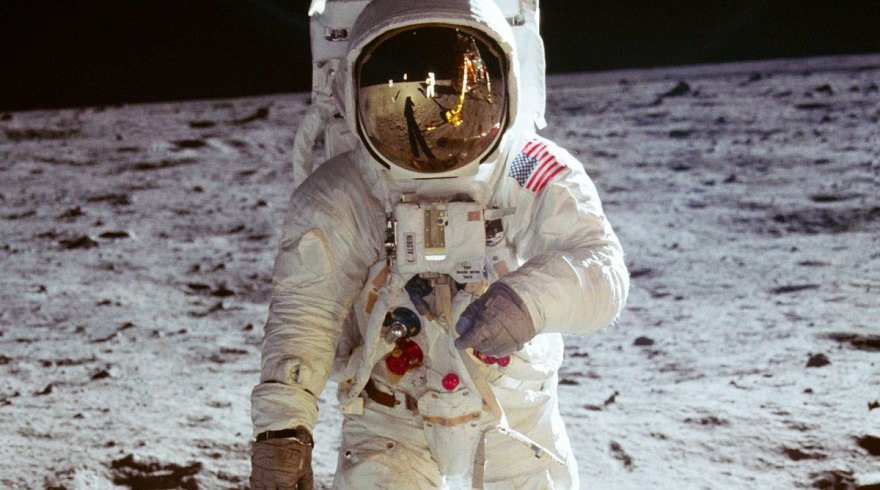

It is nothing new or unusual in politics that, when a major event is happening, several speeches are written in advance (covering the various potential scenarios) so that an immediate response can be given to whatever actually happens. One known instance was the Apollo 11 Moon landing. While it (fortunately) turned out to be a highly successful mission, a speech is known to have been prepared for the then President, Richard Nixon, to read had it ended in disaster.

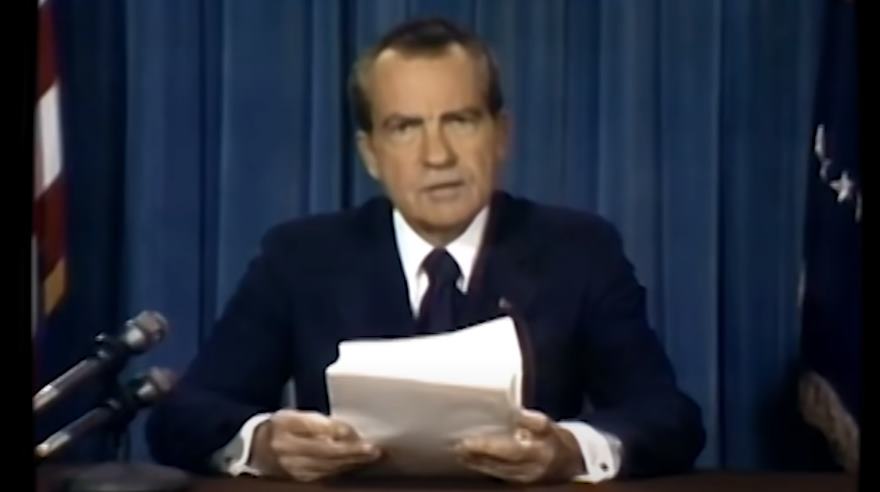

Well, following a video released by MIT, we can now (for the first time) see what that speech would’ve looked like had it ever needed to be delivered.

MIT Releases Nixon DeepFake Video

Now, just in case it needs to be said, this is not really President Nixon reading this speech. Apollo 11 was a successful mission that landed on the Moon and returned without too many difficulties (well, unless you’re one of those boneheads, and I choose my words carefully here, who think the landing itself was fake).

What MIT has done here is recreate what the speech would have looked like had a disaster occurred. Using AI software to replicate his facial features, lip movement, and, of course, his rather distinctive voice, the result does, on the surface, look disturbingly genuine!

That is, however, entirely the point. MIT has created this video largely to highlight the potential dangers that ‘DeepFakes’ can represent.

What Do We Think?

For me, there are ways you can tell it’s a fake, but mostly through a rather bizarre (and, I’ll admit, obscure) detail. Put simply, President Nixon very rarely read directly from written speeches. Well known for attempting to memorize his delivery, he would usually keep the script flat on the desk, only occasionally flicking his eyes down for reference.

That being said, it does rather highlight the point MIT is trying to make here. It’s a distinction that only someone who knows a lot about Nixon would spot. It’s so subtle that, for the majority of people, this video would appear entirely legitimate. In fact, it is so subtle that it almost makes me wonder whether it was something of a semi-deliberate error. They are, after all, very clever people at MIT.

What do you think, though? Are you impressed with this deepfake? Do you think deepfakes represent a genuine issue? Let us know in the comments!