NVIDIA Announces DGX-2 2-PetaFLOP Deep Learning System

Bohs Hansen / 6 years ago

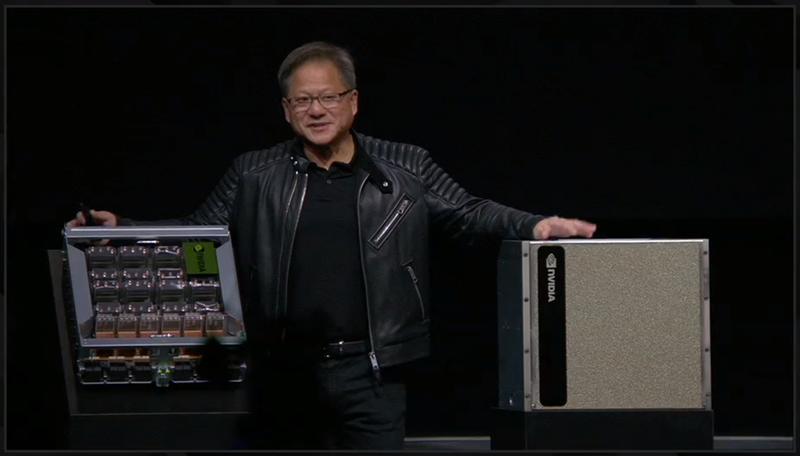

The new NVIDIA Quadro GV100 is a seriously impressive workstation graphics card, but it wasn’t the true highlight of the GTC18 keynote by CEO Jensen Huang. The DGX-2 took all the glory, at least if you ask me. This isn’t just some new system; it’s THE new system, billed as the world’s most powerful AI system. Or, the world’s largest GPU!

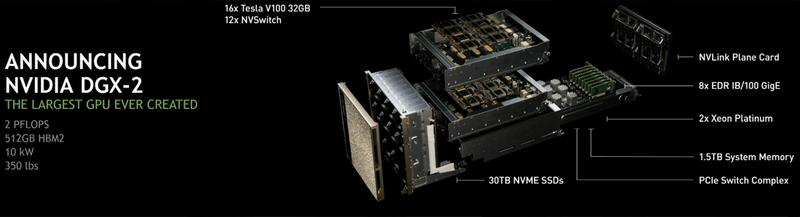

The whole thing is built around the new Tesla V100 32GB GPU and the NVSwitch for an unprecedented price-to-performance ratio. Not only that, it delivers a 10x performance boost on deep learning workloads compared to the previous generation, which launched just six months ago.

NVIDIA DGX-2

While Jensen Huang called it the world’s largest GPU in the keynote, it actually is a cluster of GPUs, interconnected by the NVSwitch technology. However, due to the technology behind it, it works as if it were a single entity.

In total, the cluster has 81,920 CUDA cores and delivers up to 2,000 TFLOPS (2 petaFLOPS) with Tensor Core computing. It is composed of 16 Tesla V100 32GB GPUs, which are connected by NVSwitch. The system has 512 GB of HBM2 memory and a total memory bandwidth of up to 14.4 TB/s. Now, if that doesn’t get you a little excited, you aren’t a real nerd.
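The totals above follow from multiplying per-GPU figures by 16. The sketch below reproduces that arithmetic. The per-GPU values (5,120 CUDA cores, 900 GB/s of HBM2 bandwidth and 125 Tensor TFLOPS per V100) are assumptions taken from NVIDIA's published V100 specifications rather than from the keynote coverage, and the function name `dgx2_totals` is made up for illustration.

```python
# Per-GPU figures for the Tesla V100 32GB. These are assumptions taken from
# NVIDIA's published V100 specifications, not from the article itself.
CUDA_CORES_PER_GPU = 5120
HBM2_GB_PER_GPU = 32
HBM2_BANDWIDTH_GB_S_PER_GPU = 900
TENSOR_TFLOPS_PER_GPU = 125


def dgx2_totals(gpu_count: int = 16) -> dict:
    """Multiply per-GPU figures by the GPU count to get system-wide totals."""
    return {
        "cuda_cores": gpu_count * CUDA_CORES_PER_GPU,
        "hbm2_gb": gpu_count * HBM2_GB_PER_GPU,
        # Convert GB/s to TB/s using 1 TB = 1000 GB.
        "hbm2_bandwidth_tb_s": gpu_count * HBM2_BANDWIDTH_GB_S_PER_GPU / 1000,
        "tensor_tflops": gpu_count * TENSOR_TFLOPS_PER_GPU,
    }


if __name__ == "__main__":
    for name, value in dgx2_totals().items():
        print(f"{name}: {value}")
```

Under those assumptions the numbers line up with the quoted specs: 81,920 CUDA cores, 512 GB of HBM2, 14.4 TB/s and 2,000 TFLOPS.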

NVSwitch Interconnect Fabric

The DGX-2 system is built in several layers and with nothing but the best of what’s available. A previous problem with expanding the interconnectivity has been the limitations of PCIe: the bandwidth can only go so far. NVSwitch offers five times higher bandwidth than the best PCIe switch, allowing developers to build systems with more GPUs hyperconnected to each other.

With a traditional setup, connecting all of this would have cost many thousands of dollars’ worth of NICs, and that’s one of the reasons NVSwitch was needed.

What else is in the NVIDIA DGX-2?

A system can’t live on GPUs alone, no matter how powerful they are. So what else is there within such a brand new NVIDIA DGX-2? Well, to start with, there are the 16 Tesla V100 32GB GPUs connected by 12 NVSwitches in two layers. These are connected with the NVLink Plane card, and the whole system is opened up for connection between multiple systems by eight EDR InfiniBand or 100 Gigabit Ethernet cards. The processors used are the most powerful ones available, the Xeon Platinum, and there are two of them. There’s also 1.5TB of system memory and 30TB of NVMe storage. All that consumes just 10 kW, but it weighs 350 pounds.

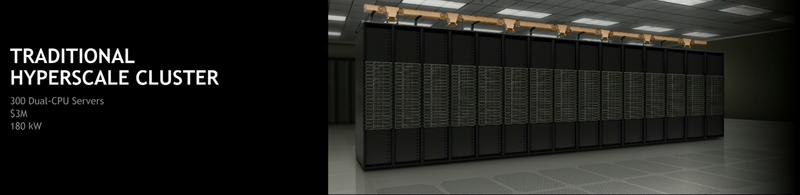

Let Us Compare That a Bit

To get the performance level delivered by NVIDIA’s DGX-2, you would need a traditional hyper-scale cluster with 300 dual-CPU systems at a price of $3 million. Such a cluster consumes about 180 kW. NVIDIA’s DGX-2 comes in at 1/60 of the space, consumes 1/18 of the power, and, hold onto your hats, comes at an eighth of the cost. At just $399 thousand, it’s a bargain. Yes, not for us ordinary consumers, but for those who need it, it seems like an April Fools’ joke. But it is true, and it will be available in Q3 this year.
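For anyone who wants to check the ratios, here is a quick sketch using only the cost and power figures quoted above. The dictionary layout and the `ratio` helper are hypothetical names chosen for this example.

```python
# Cost and power figures as quoted in the article for both options.
CLUSTER = {"cost_usd": 3_000_000, "power_kw": 180}
DGX2 = {"cost_usd": 399_000, "power_kw": 10}


def ratio(metric: str) -> float:
    """Return how many times larger the cluster's figure is than the DGX-2's."""
    return CLUSTER[metric] / DGX2[metric]


if __name__ == "__main__":
    print(f"Power: cluster uses {ratio('power_kw'):.1f}x as much")
    print(f"Cost:  cluster costs {ratio('cost_usd'):.2f}x as much")
```

The power ratio comes out at exactly 18, matching the 1/18 claim. The cost ratio is about 7.5, so "an eighth of the cost" is a slight rounding in NVIDIA's favour.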

Previously Unseen Possibilities

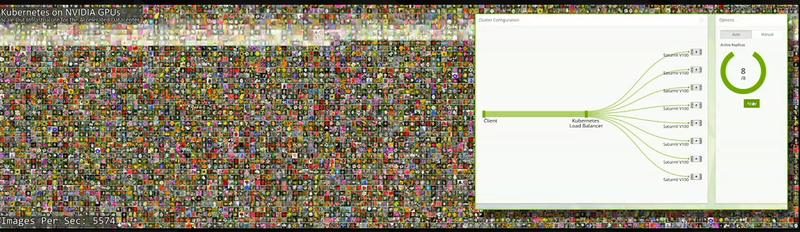

There are so many previously impossible things that are possible with this new technology, from medical research to autonomous vehicles and data analysis in real time. There is a whole lot more to it than just the hardware. NVIDIA has been working closely with all the major players in these fields. An awesome new thing is Kubernetes on NVIDIA GPUs. It allows instant scaling across thousands of GPUs, with multi-region, self-healing cluster orchestration of GPUs, optimised out of the box.

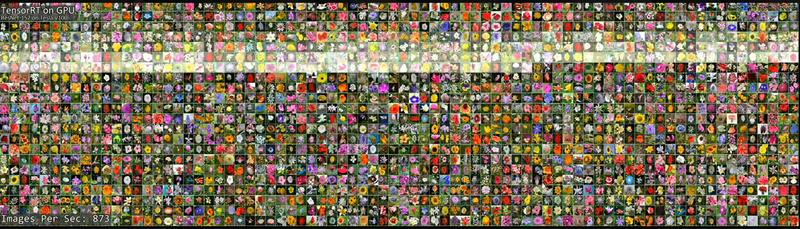

One of the demonstrations puts all of this into perspective. The best CPUs can analyse 4.3 flower images per second.

These new NVIDIA GPUs can handle close to 900.

Then, when the workload is loaded into a Kubernetes cluster, the whole thing rises to new levels, around and above 6000 images per second. And that isn’t just locally either. Fail-over and cloud connections are possible too, without hiccups.
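To put the three demo figures side by side, the sketch below computes the speedups between them. The throughput values are the rounded numbers from the demonstration ("close to 900" and "around 6000"), so the results are approximate, and the `speedup` helper is a hypothetical name for this example.

```python
# Approximate images-per-second figures from the keynote demonstration.
IMAGES_PER_SECOND = {
    "cpu": 4.3,
    "gpu": 900,
    "kubernetes_cluster": 6000,
}


def speedup(target: str, baseline: str = "cpu") -> float:
    """Return how many times faster `target` is than `baseline`."""
    return IMAGES_PER_SECOND[target] / IMAGES_PER_SECOND[baseline]


if __name__ == "__main__":
    print(f"GPU vs CPU:     {speedup('gpu'):.1f}x")
    print(f"Cluster vs CPU: {speedup('kubernetes_cluster'):.1f}x")
    print(f"Cluster vs GPU: {speedup('kubernetes_cluster', 'gpu'):.2f}x")
```

With these rounded inputs, the GPU result is roughly 209 times the CPU rate, and the Kubernetes cluster is roughly 1,395 times the CPU rate, or a little under 7 times the single-GPU figure.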

Want to know more?

The whole keynote will be added to NVIDIA’s official YouTube channel in case you missed it. You can also read more about the DGX-2 on NVIDIA’s website.