A Brief Introduction to Storage Connectors and Protocols

Bohs Hansen / 8 years ago

When it comes to storage, there are a lot of different connector types and protocols, which can confuse some. Today I will take a little look at the most commonly used ones, as well as some legacy ones and the newest ones too. The most common storage connector these days is still the SATA connector, although it is on its way out and being replaced by more modern M.2 and PCIe drives. That doesn’t mean we can’t have a look at the rest too, including the ones you maybe don’t know so well.

This little guide won’t dive too deeply into how everything works. Instead, it keeps things on a more understandable level and serves as a reference guide. Should you want to know more about the inner workings, Wikipedia is a great source where you’ll find the detailed specs and links to whitepapers and more for each of the connectors and protocols mentioned below.

IDE/PATA

This is probably the oldest storage connector, at least if we don’t go all the way back to MFM-based hard disk drives.

The Parallel ATA standard has a long history of modifications, starting all the way back with the original AT Attachment interface, and the ATA interface itself evolved from Western Digital’s original Integrated Drive Electronics (IDE) interface. There are several standards within this family and they aren’t all compatible, such as Extended IDE (EIDE) and Ultra ATA (UATA). Until fairly recently it was simply called IDE, but with the introduction of Serial ATA (SATA), IDE was renamed Parallel ATA (PATA) to distinguish the two.

The connector itself has 40 pins, while the ribbon cables come with either 40 or 80 wires. The extra 40 wires in the latter are ground lines that reduce crosstalk, and they are required for transfer modes faster than 33 MB/s. The original IDE standard was capable of about 16 MB/s, while newer revisions gradually increased this to 33, 66, 100, and finally 133 MB/s. Each controller port can control two drives on one cable, a master and a slave. When two drives share the same cable, each drive needs to be told its position with a jumper, set to either Master, Slave, or, on newer drives, Cable Select.
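To make the cable rule concrete, here is a minimal sketch of how the usable speed of a PATA channel falls out of the drive, the controller, and the cable. It assumes the commonly quoted Ultra DMA (UDMA) mode rates and the rule that modes above UDMA 2 (33 MB/s) need the 80-wire cable; the function name `negotiated_rate` is hypothetical and only for illustration, not part of any real driver.

```python
# Commonly quoted peak rates (MB/s) for Ultra DMA modes 0 through 6.
UDMA_RATES = {0: 16.7, 1: 25.0, 2: 33.3, 3: 44.4, 4: 66.7, 5: 100.0, 6: 133.3}

# Modes above UDMA 2 (33 MB/s) require the 80-wire cable.
MAX_MODE_40_WIRE = 2


def negotiated_rate(drive_mode: int, host_mode: int, cable_wires: int) -> float:
    """Hypothetical helper: peak rate in MB/s that a PATA channel can use.

    The channel runs at the fastest mode supported by both the drive and the
    host controller, capped at UDMA 2 when only a 40-wire cable is fitted.
    """
    if cable_wires not in (40, 80):
        raise ValueError("PATA ribbon cables have 40 or 80 wires")
    mode = min(drive_mode, host_mode)
    if mode not in UDMA_RATES:
        raise ValueError(f"unknown Ultra DMA mode: {mode}")
    if cable_wires == 40:
        mode = min(mode, MAX_MODE_40_WIRE)
    return UDMA_RATES[mode]


print(negotiated_rate(6, 5, 80))  # 100.0
print(negotiated_rate(6, 6, 40))  # 33.3
```

The second call shows why an old 40-wire cable quietly limits even a fast drive to 33 MB/s.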

IDE also gave rise to another standard called ATAPI, but that’s better suited for another article. In short, it allows for extra commands such as media eject, which brought Zip drives along, among other things.

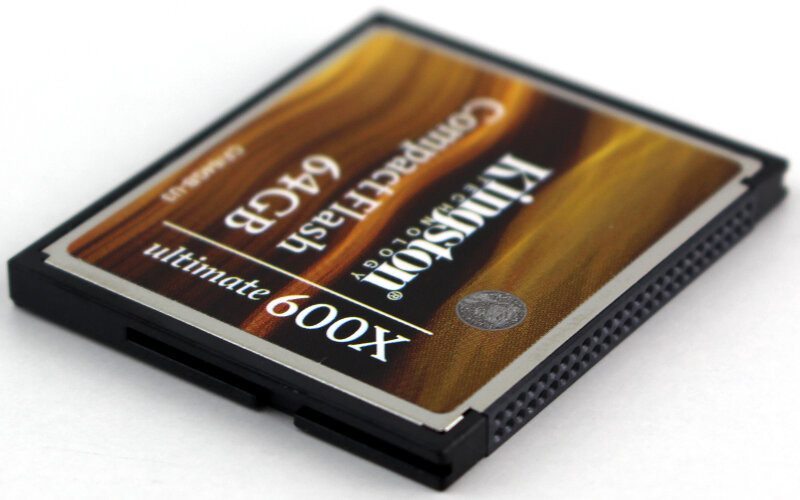

PATA remains widely used in industrial and embedded applications that use CompactFlash (CF) storage, which is designed around the legacy PATA standard, even though the new CFast standard is based on SATA.

SATA

Serial ATA, or SATA for short, is, as previously mentioned, the most common storage interface these days, but its days are numbered with the arrival of next-generation form factors and connectors. Even so, it will still be around for quite some time. SATA came as a replacement for PATA and added several improvements, such as smaller and cheaper cables, native hot swapping, faster data transfer rates, and more efficient transfers through an I/O queuing protocol, Native Command Queuing (NCQ).

When I said SATA is coming to an end, that goes for the connector type more than for the protocol itself, although the protocol is on its way out too. We’re still seeing the protocol used with next-generation connectors such as M.2 and SATA Express, which can reach higher speeds through PCIe, but the SATA protocol was designed for mechanical drives and as such isn’t the best fit for NAND-based drives.

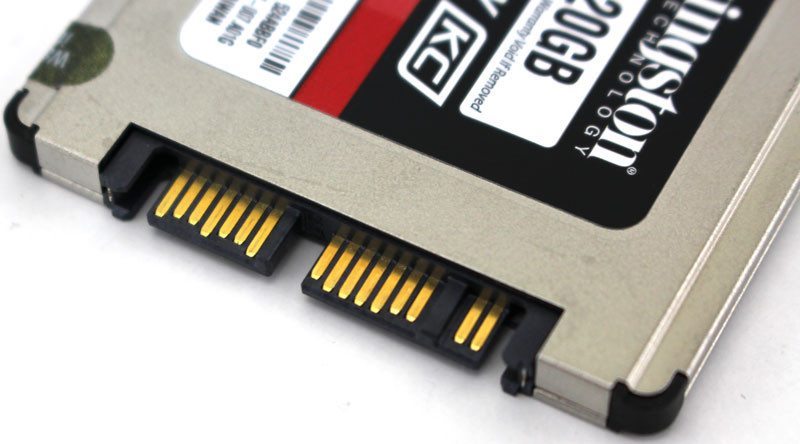

There have been three major revisions of SATA, running at 1.5, 3, and 6 Gbit/s, and we’re currently on the third, with some minor revisions on top. They all use the same connector type and are, for the most part, both backward and forward compatible. Both 3.5-inch and 2.5-inch drives, mechanical HDDs and NAND-based SSDs alike, use this connector.
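The jump from a link speed in Gbit/s to the familiar MB/s figures is simple arithmetic. SATA uses 8b/10b encoding, so every data byte travels as 10 bits on the wire. The short sketch below, which ignores command and protocol overhead, shows where the usual 150, 300, and 600 MB/s numbers come from; the names are illustrative only.

```python
# SATA revisions and their line rates in gigabits per second.
SATA_LINE_RATES_GBPS = {"SATA 1.0": 1.5, "SATA 2.0": 3.0, "SATA 3.0": 6.0}


def usable_mb_per_s(line_rate_gbps: float) -> float:
    """Payload bandwidth in MB/s for a SATA link, ignoring protocol overhead.

    With 8b/10b encoding, 10 bits on the wire carry one 8-bit data byte,
    so dividing the line rate in bits per second by 10 gives bytes per second.
    """
    bits_per_second = line_rate_gbps * 1e9
    bytes_per_second = bits_per_second / 10
    return bytes_per_second / 1e6


for name, rate in SATA_LINE_RATES_GBPS.items():
    print(f"{name}: {rate} Gbit/s -> {usable_mb_per_s(rate):.0f} MB/s")
```

This prints 150, 300, and 600 MB/s for the three revisions. Real-world SATA 3.0 SSDs top out somewhat below 600 MB/s because of the overhead this sketch leaves out.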

Micro-SATA

Micro-SATA is a spin-off from the normal SATA connector, and it was intended for use in smaller form factor systems such as netbooks. The drives themselves are only 1.8 inches in size, and the custom connector isn’t compatible with standard SATA connectors. This was an intermediate stage before the real next-generation form factors such as M.2 and mSATA came around. Very few drives used this connector, and most people are unlikely to ever come across one.

mSATA

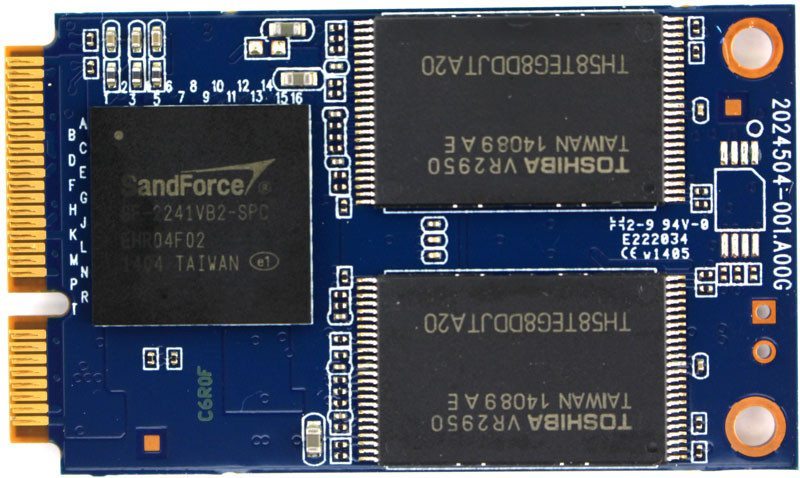

Moving fully into the flash generation of drives, the first next-generation form factor was mSATA, which again is a spin-off from SATA, this time combined with the physical form factor and connector of the PCI Express Mini Card. The drives were slimmed down in overall size and stripped of their enclosures, coming as bare PCB drives. They are still widely used in small form factor and portable systems, although they’re increasingly being replaced by M.2-based drives.

Embedded and industrial systems will, however, still rely on these drives for quite some time, until the physical hardware gets replaced down the line. Although this is a new type of drive, it still speaks the standard SATA 3.0 protocol.

M.2 (NGFF)

The true next-generation form factor is M.2, which was formerly known as NGFF (Next Generation Form Factor). The connector carries quite a few functions, which can be a little confusing to some. It can be used for PCI Express 3.0, Serial ATA, and USB 3.0, which makes the onboard connector highly versatile. However, that versatility can create some confusion among customers, and you should make sure you get a drive that is compatible with your system. For consumer systems, there are two standards: AHCI-based SATA drives and PCIe-based drives, which mostly use NVMe, and that’s really the difference here. The newest generation of motherboards often supports both, so compatibility isn’t a big issue, but the two differ in performance. While support for AHCI ensures software-level backward compatibility with legacy SATA devices and legacy operating systems, NVM Express is designed to fully utilize the capability of high-speed PCI Express storage devices to perform many I/O operations in parallel.

Size-wise there are a lot of options too, but we’ll stick to consumer sizes here, which are all 22 mm wide. The length still varies, although the most common is 80 mm; these are referred to as 2280 modules. Other lengths include 30, 42, 60, and 110 mm.
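The size code is simply the width followed by the length, both in millimeters. As a small sketch, a hypothetical helper `parse_m2_size` can split the codes for the consumer sizes listed above. It assumes a bare four- or five-digit size code with a two-digit width, and it does not handle any extra suffixes a full part designation might carry.

```python
def parse_m2_size(code: str) -> tuple[int, int]:
    """Hypothetical helper: split an M.2 size code into (width_mm, length_mm).

    The first two digits give the width and the remaining two or three
    digits give the length, so "22110" means 22 mm wide and 110 mm long.
    """
    if not code.isdigit() or len(code) not in (4, 5):
        raise ValueError(f"not an M.2 size code: {code!r}")
    return int(code[:2]), int(code[2:])


for code in ("2230", "2242", "2260", "2280", "22110"):
    width, length = parse_m2_size(code)
    print(f"{code}: {width} mm x {length} mm")
```

Checking the length this way is worth doing before buying, since a laptop slot built for 2242 modules will not physically take a 2280 drive.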

There are also differences in the keys and sockets. There are B-key, M-key, and B & M-key drives, and the difference lies in where the notch is cut into the edge connector. The drive pictured below has both. For example, M.2 modules with two notches, in the B and M positions, use up to two PCI Express lanes and provide broader compatibility at the same time, while M.2 modules with only one notch, in the M position, use up to four PCI Express lanes; both kinds may also be SATA storage devices. Similar keying applies to M.2 modules that use the provided USB 3.0 connectivity.
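The keying rules above can be written down as two tiny checks. In this sketch, a module is described by the set of notches it has and a socket by its single key; the module fits when it has a notch where the socket has its key. The lane limits follow the paragraph above, plus the assumption that B-only modules, like B & M ones, are limited to two lanes since the B key offers at most two PCIe lanes. The function names are hypothetical.

```python
VALID_NOTCHES = {"B", "M"}


def fits(module_notches: set[str], socket_key: str) -> bool:
    """Hypothetical check: a module fits when it has a notch at the socket's key."""
    return socket_key in module_notches


def max_pcie_lanes(module_notches: set[str]) -> int:
    """Hypothetical upper bound on PCIe lanes for a module.

    M-only modules may use up to four lanes; modules with a B notch
    (B-only or B & M) use at most two.
    """
    if not module_notches or not module_notches <= VALID_NOTCHES:
        raise ValueError("expected a non-empty subset of {'B', 'M'}")
    return 4 if module_notches == {"M"} else 2


print(fits({"B", "M"}, "M"), max_pcie_lanes({"B", "M"}))  # True 2
print(fits({"M"}, "B"), max_pcie_lanes({"M"}))            # False 4
```

Note that a physical fit says nothing about the protocol: an M-key SATA drive slots into an M-key socket just fine, but it will only work if that socket is also wired for SATA.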

U.2 and SATA-Express

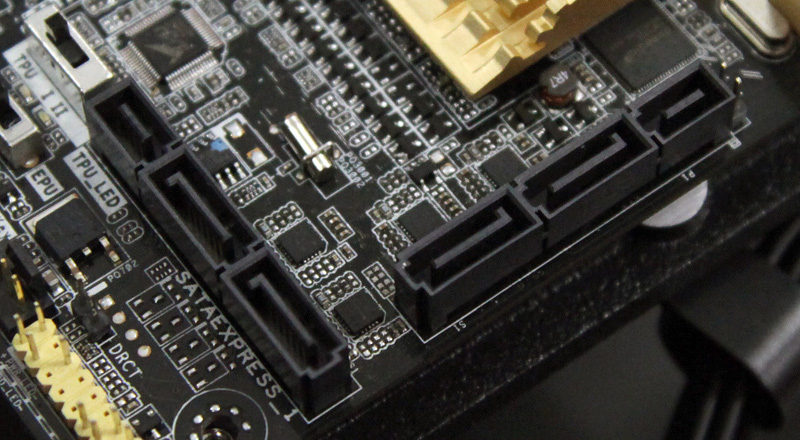

SATA Express is an interface that I don’t think will take off, although a lot of motherboards on the market currently support it. It supports both SATA and PCI Express storage devices and was initially standardized in the SATA 3.2 specification.

The SATA Express interface supports both PCI Express and SATA storage devices by exposing two PCI Express 2.0 or 3.0 lanes and two SATA 3.0 (6 Gbit/s) ports through the same host-side SATA Express connector. The exposed PCI Express lanes provide a pure PCI Express connection between the host and storage device, with no additional layers of bus abstraction.

The SATA Express connector used on the host side is backward compatible with the standard 3.5-inch SATA data connector, while it also passes those two PCI Express lanes through to the storage device. With this design, it supports both NVMe and AHCI-based drives at speeds close to 2000 MB/s. However, that’s already being surpassed by M.2 drives.

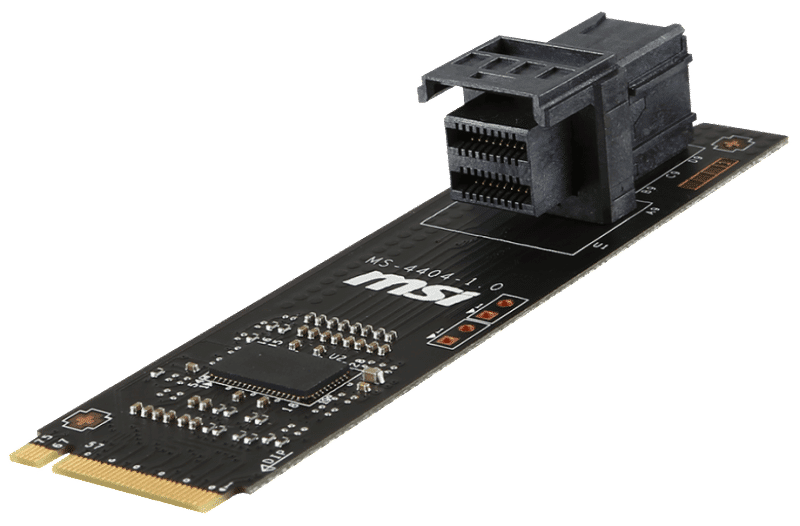

The U.2 connector, here seen on an M.2 adapter board, is mechanically identical to the SATA Express plug but provides the full four PCIe lanes for higher throughput.
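The “close to 2000 MB/s” figure for SATA Express, and the higher ceiling for U.2, follow from lane arithmetic. PCIe 2.0 runs at 5 GT/s per lane with 8b/10b encoding, while PCIe 3.0 runs at 8 GT/s per lane with the more efficient 128b/130b encoding. This sketch only accounts for line encoding, so treat the results as upper bounds; packet overhead lowers real throughput further. The names are illustrative.

```python
# Per-lane transfer rate (GT/s) and line-encoding efficiency per PCIe generation.
PCIE_GENERATIONS = {
    2: (5.0, 8 / 10),     # PCIe 2.0: 8b/10b encoding
    3: (8.0, 128 / 130),  # PCIe 3.0: 128b/130b encoding
}


def pcie_mb_per_s(generation: int, lanes: int) -> float:
    """Approximate one-direction bandwidth in MB/s, counting only line encoding."""
    gt_per_s, efficiency = PCIE_GENERATIONS[generation]
    bits_per_second = gt_per_s * 1e9 * efficiency * lanes
    return bits_per_second / 8 / 1e6


# SATA Express exposes two lanes; U.2 provides four.
for name, lanes in (("SATA Express", 2), ("U.2", 4)):
    print(f"{name}, PCIe 3.0 x{lanes}: {pcie_mb_per_s(3, lanes):.0f} MB/s")
```

This prints roughly 1969 MB/s for two PCIe 3.0 lanes and 3938 MB/s for four, which is why the four-lane U.2 and M.2 connections pulled ahead.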

PCIe

PCI Express storage drives have been on the market for some time, and they started out in the enterprise sector, where more input/output operations were required than normal storage drives could deliver. With the power of the PCI Express bus, vendors were able to push far more performance out of the drives than they could through the comparatively limited SATA 3.0 interface.

There have been quite a few storage drives in this form factor, and the OCZ RevoDrive is definitely one of the most familiar to consumers. Today we mostly see NVMe drives using this form factor alongside the M.2 form factor, and Intel was one of the first to combine the two for the consumer market with its impressive Intel 750 SSD. Since then, more companies have jumped on the bandwagon, such as OCZ with the RevoDrive 400, Zotac with its Sonix, and Plextor with its M8Pe series, just to name a few.

Protocols

So far I’ve covered most of the available interfaces, so now it’s time to talk a little about the protocols, or more precisely the host controller interfaces, used by the drives.

AHCI

The Advanced Host Controller Interface (AHCI) is a technical standard defined by Intel that specifies the operation of SATA host bus adapters in a non-implementation-specific manner. AHCI gives software developers and hardware designers a standard method for detecting, configuring, and programming SATA/AHCI adapters and although AHCI is separate from the SATA standard, it exposes SATA’s advanced capabilities such as hot swapping and native command queuing to the host system.

One of the main advantages over IDE is that AHCI can handle up to 32 devices, one per port, instead of just four (two channels with a master and a slave each), on top of general improvements such as hot swapping that make our modern lives easier.
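Where the limit of 32 comes from is visible in the controller itself. An AHCI controller describes itself through memory-mapped registers: the Capabilities register (CAP, offset 0x00) stores the number of ports and the number of command slots per port as zero-based 5-bit fields, and the Ports Implemented register (PI, offset 0x0C) is a 32-bit mask with one bit per port. Five bits can only count up to 32, which caps both values. The sketch below decodes a made-up register value; the helper names are hypothetical and the fields covered are limited to NP (bits 4:0), NCS (bits 12:8), and SNCQ (bit 30).

```python
def decode_ahci_cap(cap: int) -> dict:
    """Hypothetical helper: decode a few fields of the AHCI CAP register.

    NP (bits 4:0) and NCS (bits 12:8) are stored zero-based, so one is added
    to each. SNCQ (bit 30) reports Native Command Queuing support.
    """
    return {
        "ports": (cap & 0x1F) + 1,
        "command_slots": ((cap >> 8) & 0x1F) + 1,
        "ncq": bool(cap & (1 << 30)),
    }


def implemented_ports(pi: int) -> list[int]:
    """List the port numbers whose bit is set in the PI register."""
    return [port for port in range(32) if pi & (1 << port)]


# Made-up register values for a six-port controller with NCQ support.
cap = (1 << 30) | (31 << 8) | 5
print(decode_ahci_cap(cap))         # {'ports': 6, 'command_slots': 32, 'ncq': True}
print(implemented_ports(0b111111))  # [0, 1, 2, 3, 4, 5]
```

The 32 command slots per port are the same single 32-command queue that NVMe later expands on.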

NVMe

NVM Express (NVMe), or the Non-Volatile Memory Host Controller Interface Specification (NVMHCI), is a logical device interface specification for accessing non-volatile storage media attached via the PCI Express bus. Non-volatile memory here essentially means flash memory, which is what makes up SSDs, memory cards, and similar devices that use silicon rather than spinning platters to store data. NVMe has been designed from the ground up to capitalize on the low latency and internal parallelism of flash-based storage devices, mirroring the parallelism of contemporary CPUs.

Where AHCI can handle one command queue with 32 commands, NVMe can handle 65535 queues with 65536 commands each. It puts a lot less stress on the CPU while making far better use of it, which results in the increased performance and decreased latency that we see in drives using this interface.
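To put those limits side by side, a quick calculation multiplies queues by commands per queue. These are theoretical ceilings taken from the figures above; in practice, operating systems create only a handful of NVMe queues, often one per CPU core, so the real advantage comes from parallel queues rather than from ever filling billions of slots.

```python
# Queue limits as described above.
AHCI_QUEUES, AHCI_COMMANDS_PER_QUEUE = 1, 32
NVME_QUEUES, NVME_COMMANDS_PER_QUEUE = 65535, 65536


def max_outstanding(queues: int, commands_per_queue: int) -> int:
    """Theoretical upper bound on commands that can be queued at once."""
    return queues * commands_per_queue


ahci = max_outstanding(AHCI_QUEUES, AHCI_COMMANDS_PER_QUEUE)
nvme = max_outstanding(NVME_QUEUES, NVME_COMMANDS_PER_QUEUE)
print(f"AHCI: {ahci:,}")  # AHCI: 32
print(f"NVMe: {nvme:,}")  # NVMe: 4,294,901,760
```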