IBM Intend to Solve Computational Scaling Using “5D Blood”

Alexander Neil / 9 years ago

One of the biggest obstacles currently facing the advancement of computing and electronic engineering is density. While current computer chips are already incredibly small, the same cannot be said of the hardware needed to power them and to safely dissipate the heat they generate. IBM hope to solve both of those issues in one sweep, using a fluid termed “5D Blood”.

Over the years, while chips have got smaller and smaller, they have become less and less able to handle heat. A smaller surface area means less contact can be made with heatsinks, and heat generation is not uniform across a chip, with the more heavily used sections becoming hot spots. Reducing the chip’s size also puts the hot spots closer together, making it harder to draw heat away from them. Processing chips are also power-hungry: most pins on a modern CPU are used solely to provide the chip with a stable supply of power. These two issues combined limit how densely chips can be packed and all but forbid the safe stacking of chips in most usage scenarios.

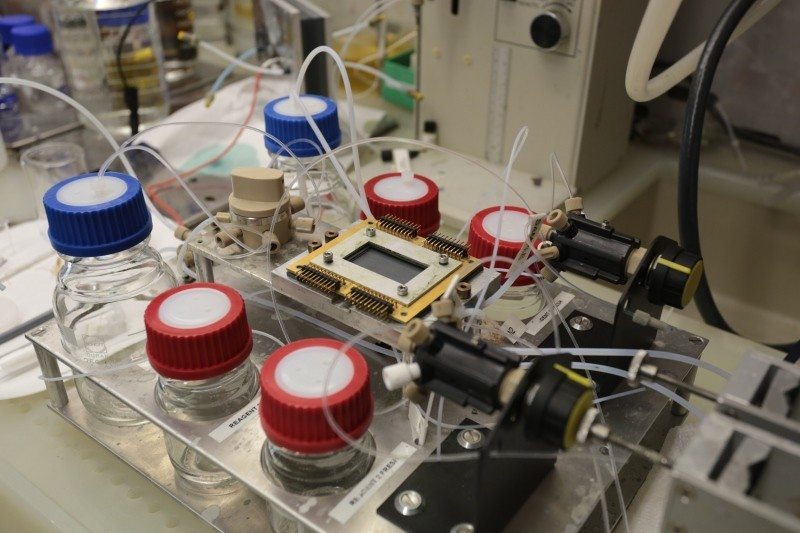

This is where 5D Blood comes in. The dimensions are not the ones usually thought of; instead they belong to a 5-dimensional computing model. This model involves stacking 2D chips into a 3D pile, with the two extra dimensions being power and cooling. 5D Blood contributes both of these dimensions: it is an electrochemical liquid capable of both carrying an electrical charge to a chip and carrying heat away from it. While it is currently at a very early stage of development, IBM scientists have so far been able to deliver 10 milliwatts of power to a chip. With liquid cooling being a far more developed field within IBM, due to advancements made for use in supercomputers, the real challenge is to increase the amount of power the fluid can deliver and to make it easily rechargeable. According to IBM’s papers, the projected numbers look favorable, showing a charge-discharge cycle efficiency of over 80% and the capability to supply around 1V.
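To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes the 10 milliwatts is delivered at exactly 1V and that the charge-discharge efficiency is exactly 80%. Combining the demonstrated and the projected numbers in this way is an assumption made purely for illustration, and the function names are hypothetical.

```python
# Back-of-the-envelope figures only; combining these numbers is an assumption.

def current_amps(power_watts, voltage_volts):
    """Current drawn for a given power at a given voltage (I = P / V)."""
    return power_watts / voltage_volts


def input_power_watts(delivered_watts, cycle_efficiency):
    """Power needed to recharge the fluid so it can deliver `delivered_watts`."""
    return delivered_watts / cycle_efficiency


delivered = 0.010   # 10 milliwatts reaching the chip (figure from the article)
voltage = 1.0       # around 1V (projected figure, assumed exact here)
efficiency = 0.80   # "over 80%" charge-discharge efficiency (assumed exactly 80%)

current = current_amps(delivered, voltage)
charging = input_power_watts(delivered, efficiency)
lost = charging - delivered

print(f"Current into the chip: {current * 1000:.1f} mA")
print(f"Power needed to recharge the fluid: {charging * 1000:.1f} mW")
print(f"Power lost over the cycle: {lost * 1000:.1f} mW")
```

Under these assumptions the fluid would carry about 10 milliamps to the chip, and recharging it would take about 12.5 milliwatts for every 10 milliwatts delivered.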

The reason for it being called blood is its inspiration in biological processing. Mammalian brains use orders of magnitude less power than some of the greatest supercomputers, while still fitting equivalent processing power into a far smaller package. If brain-like computers were to be developed from thousands or millions of tiny chips, they would still need a medium to deliver power and carry away heat. For us, such a medium already exists in our bodies: blood.

As computational engineering aims to become more and more efficient, like natural processes, what developments emulating nature could be next?